GAB welcomes this post by John Chevis, a former member of the Australian Federal Police, former accountant, and current member of the Intelligent Systems for State and Societal Resilience Hub at the University of New South Wales. As John explains below, he and colleagues at UNSW have developed an AI tool for producing National Anti-Money Laundering Risk Assessments. They are looking for those interested either in using it to conduct an NRA or supporting its further development. John and team can be reached at j.chevis@unsw.edu.au or johnchevis1@gmail.com.

Using Artificial Intelligence to comply with the Financial Action Task Force’s directive to conduct a National Risk Assessment – an exercise to “identify, assess, and understand the money laundering and terrorist financing risks” member states face – would seem obvious.

Financial Intelligence Units collect thousands, in larger countries millions, of reports banks and financial institutions submit about possible money laundering by customers. But a database of what is variously termed Suspicious Transaction or Suspicious Matter or Suspicious Activity Reports is by no means the only source for determining the money laundering risks a nation is exposed to. Other databases with millions of potentially useful records include those on cash transactions, company ownership, land titles, court cases, police investigations, and Politically Exposed Persons. There are also media accounts and social media posts. All grist for an NRA mill.

That’s where AI in the form of Large Language Models comes in. An LLM can sort through massive, unstructured datasets to identify patterns, trends, and anomalies, extracting relationships that human analysts with the most advanced mathematical tools might take years to spot, if ever. When brought to bear on data available to an FIU, the resulting analysis will not only highlight vulnerabilities in the nation’s anti-money laundering regime but also provide investigative leads for law enforcement. Precisely the objectives of a National Risk Assessment.

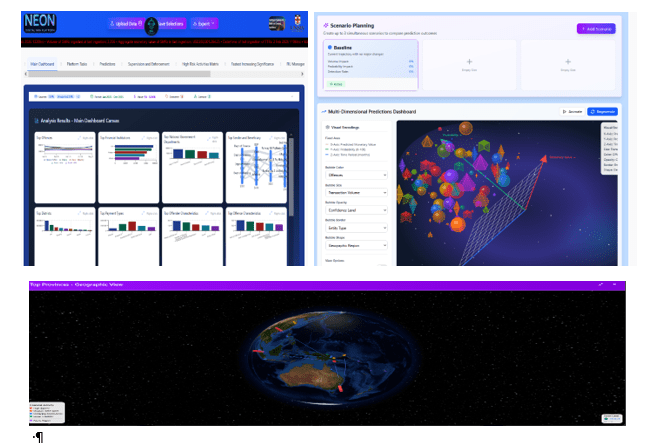

Despite the obvious value of turning an LLM loose on FIU data, our team at the University of New South Wales is, to our knowledge, the first to apply AI to producing an effective digital National Risk Assessment. Funded by the Australian Department of Foreign Affairs and built for the Papua New Guinea financial intelligence unit, it is called “Neon.” Here is how it works.

Neon uses a ‘captive’ Large Language Model run locally with Ollama. ‘Captive’ means that it operates within a secure, closed, private environment, ensuring data privacy and security. It reads the Suspicious Matter Reports (SMRs) it is fed to identify the offence, offender characteristics, offence characteristics, companies, sectors and a range of other information. That data is combined with company registration details, threshold transaction reports and other data sources to deliver charts, tables, link diagrams and Sankey diagrams.
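As a rough illustration of the extraction step, the sketch below sends the free text of one report to a locally hosted model through Ollama's `/api/generate` HTTP endpoint and parses a JSON reply. The model name `llama3`, the prompt wording and the field names are assumptions made for illustration; the post does not describe Neon's actual prompts or schema.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
# Hypothetical output schema, loosely based on the fields named in the post.
FIELDS = ["offence", "offender_characteristics", "offence_characteristics", "sectors"]


def build_request(smr_text: str, model: str = "llama3") -> dict:
    """Build the JSON payload for Ollama's /api/generate endpoint."""
    prompt = (
        "Read the suspicious matter report below and return a JSON object "
        f"with the keys {', '.join(FIELDS)}.\n\nReport:\n{smr_text}"
    )
    # stream=False asks for a single reply; format="json" asks for valid JSON.
    return {"model": model, "prompt": prompt, "stream": False, "format": "json"}


def parse_reply(reply: dict) -> dict:
    """Pull the expected fields out of an Ollama reply, filling gaps with None."""
    extracted = json.loads(reply["response"])
    return {field: extracted.get(field) for field in FIELDS}


def extract(smr_text: str) -> dict:
    """Send one report to the local model. Requires a running Ollama server."""
    body = json.dumps(build_request(smr_text)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return parse_reply(json.load(response))
```

Because every request goes to `localhost`, the report text never leaves the machine hosting the model, which is the point of a ‘captive’ deployment.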

Neon applies the aggregated monetary value from SMRs to calculate the significance of almost everything mentioned in the data – be that companies, government departments, State-Owned enterprises, Politically Exposed Persons, addresses, payment types, money laundering methods, currencies and so forth.
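A minimal sketch of that idea, assuming each SMR carries a single monetary value and a set of extracted elements, might look like the following. The records, field names and figures are invented for illustration and are not Neon's data model.

```python
from collections import defaultdict

# Hypothetical records: each SMR has a monetary value and the elements
# extracted from its text. Names and figures are invented.
smrs = [
    {"value": 500_000, "elements": {"offence": "corruption", "sector": "construction"}},
    {"value": 120_000, "elements": {"offence": "fraud", "sector": "construction"}},
    {"value": 80_000, "elements": {"offence": "corruption", "sector": "law firms"}},
]


def significance(reports):
    """Sum the reported value attached to each (category, element) pair."""
    totals = defaultdict(float)
    for report in reports:
        for category, element in report["elements"].items():
            totals[(category, element)] += report["value"]
    return dict(totals)


def ranked(totals, category):
    """Return the elements of one category, most significant first."""
    rows = [(name, value) for (cat, name), value in totals.items() if cat == category]
    return sorted(rows, key=lambda row: row[1], reverse=True)


totals = significance(smrs)
print(ranked(totals, "offence"))  # [('corruption', 580000.0), ('fraud', 120000.0)]
```

This sketch attributes the full value of a report to every element it mentions, which is a simplifying assumption; a production system would need a rule for apportioning value when one report names several elements of the same kind.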

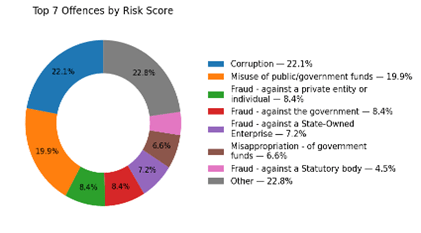

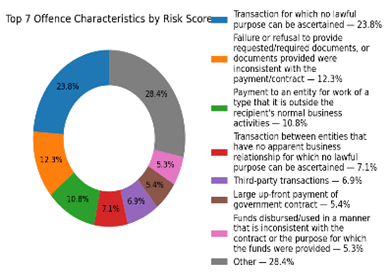

This supports the objective identification of the most significant threats (offences) and vulnerabilities. The chart above shows the relative significance of the seven most significant offences. And the chart below shows an absolute measure of the significance of a particular vulnerability, being ‘Offence Characteristics’.

Furthermore, Neon uses the financial flows between those elements to show the intersections, correlations and links between offences (‘threats’) and vulnerabilities (such as government departments, banks, law firms, payment methods etc.).
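One simple way to picture such an intersection is a table of monetary value keyed by (offence, vulnerability) pairs. The sketch below is an assumption about how that could be computed, with invented flows, and is not a description of Neon's internals.

```python
from collections import Counter

# Hypothetical flows: each row links one offence to one vulnerability
# and carries the monetary value reported for that link.
flows = [
    ("corruption", "government department", 400_000),
    ("corruption", "law firm", 150_000),
    ("fraud", "bank", 90_000),
    ("corruption", "government department", 60_000),
]


def intersections(rows):
    """Total the value flowing through each (offence, vulnerability) pair."""
    matrix = Counter()
    for offence, vulnerability, value in rows:
        matrix[(offence, vulnerability)] += value
    return matrix


matrix = intersections(flows)
# List the pairs from largest to smallest total value.
for (offence, vulnerability), value in matrix.most_common():
    print(f"{offence} x {vulnerability}: {value:,}")
```

Pair totals of this kind are also the natural input for a link diagram or Sankey diagram, where each pair becomes a band whose width reflects its value.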

This approach follows the FATF suggestion that member states should identify and quantify the intersection of the most significant threats with the most significant related vulnerabilities, requiring countries to measure the significance of vulnerabilities as well as threats – something that has been an almost insurmountable challenge until now.

In making the choice to use monetary value as the primary measure of significance, the UNSW team drew on the work of Joras Ferwerda and Peter Reuter published by the World Bank in 2022 (here). We found that step opened up opportunities for the application of a vast array of AI, machine learning, mathematical and statistical operations to be applied to the problem of detecting and addressing financially motivated crime.

For example, use of monetary value allowed the Neon platform to highlight to users apparent ‘unreported Suspicious Matters’ (that is, transactions and events that by law should have been reported but were not) thereby reducing a country’s sole reliance on the goodwill and motivation of banks for the detection of money laundering and predicate offending.

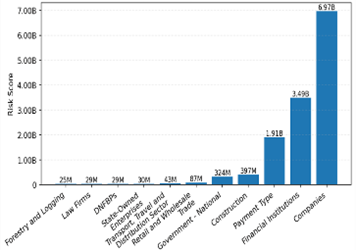

It has also allowed Neon to compare elements in absolute terms, removing the ambiguity that surrounds “high”, “medium” and “low” measures. So, for example, Neon can show that an offence, sector or company is not just ‘more’ significant than the next element in line, but that it is twice as significant, or ten thousand times more significant. In the chart above ‘companies’ are identified as about 17 times more significant than the sector ‘construction’ and 240 times more significant than law firms.
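The arithmetic behind such statements is a plain ratio of aggregated values. In the sketch below the totals are hypothetical, chosen only so that the ratios match the ones quoted from the chart; they are not figures from Neon.

```python
def times_more_significant(value_a: float, value_b: float) -> float:
    """Return how many times larger value_a is than value_b."""
    if value_b <= 0:
        raise ValueError("the comparison value must be positive")
    return value_a / value_b


# Hypothetical totals, invented to reproduce the quoted ratios.
totals = {"companies": 2_040_000, "construction": 120_000, "law firms": 8_500}

print(times_more_significant(totals["companies"], totals["construction"]))  # 17.0
print(times_more_significant(totals["companies"], totals["law firms"]))  # 240.0
```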

Importantly, in relation to corruption offences, users can see which Politically Exposed Persons, government departments, State-Owned Enterprises and statutory authorities are linked by monetary flows to the most significant types of corruption.

Could Neon Tell Policy-Makers Whether They’ve Been Effective in Their AML Efforts?

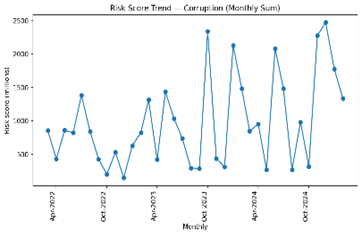

Tracking temporal changes is crucial to the evaluation of the effectiveness of AML policies and interventions.

Neon shows users the changes over time in individual elements – such as a particular financial institution or a particular offence (as seen in the chart below, showing changes in the significance of the ‘corruption’ offence) – as well as in the intersections of those elements. For example, Neon can also show the changes in significance of the intersection of a particular offence; a particular geographic location; and a particular government department.
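A minimal sketch of tracking one such intersection over time, assuming each record is tagged with a year, might look like this. The records, labels and figures are invented for illustration.

```python
from collections import defaultdict

# Hypothetical records: year, offence, location, government department, value.
records = [
    (2021, "corruption", "Province A", "Department A", 300_000),
    (2022, "corruption", "Province A", "Department A", 450_000),
    (2022, "fraud", "Province B", "Department B", 70_000),
    (2023, "corruption", "Province A", "Department A", 180_000),
]


def yearly_totals(rows, offence, location, department):
    """Sum value per year for one offence/location/department intersection."""
    totals = defaultdict(int)
    for year, off, loc, dept, value in rows:
        if (off, loc, dept) == (offence, location, department):
            totals[year] += value
    return dict(sorted(totals.items()))


def year_on_year_change(series):
    """Return the percentage change from each year to the next."""
    years = list(series)
    return {
        later: round(100 * (series[later] - series[earlier]) / series[earlier], 1)
        for earlier, later in zip(years, years[1:])
    }


series = yearly_totals(records, "corruption", "Province A", "Department A")
print(series)  # {2021: 300000, 2022: 450000, 2023: 180000}
print(year_on_year_change(series))  # {2022: 50.0, 2023: -60.0}
```

A fall such as the hypothetical 60 per cent drop in 2023 is the kind of signal a policymaker would then want to test against the timing of a specific intervention.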

This measurement of intersectionality addresses the FATF requirement for countries to measure money laundering risk in terms of “where a threat exploits a vulnerability producing a consequence” as well as providing invaluable information for policymakers and law enforcement.

Large Language Models Are Everywhere – But Not Ones That Securely Handle Sensitive Data

One of the key challenges that the UNSW team have overcome is the application of AI in a ‘captive’ space.

Given the sensitivity of the data, the LLM used by a data-driven NRA such as Neon can’t just reach out to the cloud every time it needs to build its vocabulary. Such a situation would make the heads of FIUs (and the reporting entities from which the data is derived) nervous about data leakage. To overcome this problem, the team has used Ollama and trained it on actual Suspicious Matter Reports to identify offences, offender characteristics, offence characteristics and sectors.

While there is always room for improvement, Ollama has proven itself to be pretty good so far at identifying these things from free-form text contained in Suspicious Matter Reports and PEPs lists.

But You’re Only Using a Limited Dataset... So How Can You Rely on the Outputs?

An argument against the use of financial data in this way is that such data cannot capture every possible instance of offending, and therefore the output may not accurately reflect the financial-crime landscape. A truncated dataset, it may be argued, can lead to inaccurate conclusions and erroneous policy decisions.

This assertion is, of course, absolutely correct. To use the old adage, “all models are wrong, but some are useful”.

The data Neon ingests may not cover the entire gamut of offences, but no NRA does. A digital NRA based on large volumes of verifiable data is inarguably more reliable than opinion-based NRAs. And the process is transparent about the topics and areas that it doesn’t address, allowing stakeholders to make suitable policy adjustments or plan for additional data collection.

But That’s Not a National Risk Assessment... It Looks More Like an Intelligence Product!

Neon doesn’t look like your typical NRA. Firstly, it is not a moldy old document that is out of date before it is even released. It is a digital platform. Sure, it can produce tailored reports via an auto-report writer – it can even produce the first drafts of ‘close-hold’ and ‘public’ versions of an NRA, but that’s not what makes it happy. What makes Neon “happy” (or at least its human developers – who are capable of emotion) is for it to deliver novel, accurate, near-real-time insights directly to stakeholders in multiple formats. Those insights are drawn from vast volumes of reliable data, providing the national-level overview required for policy formulation and the operational and tactical level intelligence that supports operational planning.

Where To Next for Neon

Having proven the concept with Papua New Guinea, the UNSW team are seeking to provide Neon to other countries. The blueprint for the development of the Neon Digital NRA Platform is pictured below. A link to the page is here.

The blueprint includes plans for an expansion of the user interface as well as expansion into predictive analysis using machine learning and allowing users to test scenarios against the data.

Curious to understand – if the data used is Suspicious Matter Reports, wouldn’t it mean that it’s not all substantiated? I think one of the issues of FIUs globally is that they receive so many STRs/SMRs that it is impossible to investigate all of them. As a result, if the NRA uses SMR data where that data was not investigated – wouldn’t it be reflective more of what the financial institutions think could be suspicious, instead of focusing on real problems / predicate offences? I’ve seen various estimates (more like guesstimates) and – in some cases – it’s estimated that more than half of the reports submitted to FIUs are actually not substantiated.

Good question. Passed it on to the author

Hi Ruta,

Thank you for that question. You make a very, very good point.

Most SMRs are never used for anything – and certainly not for National Risk Assessments.

In my experience, that is because FIUs typically don’t have the human resources to read, review, categorise, analyse, substantiate/validate, or even disseminate, the vast majority of what they receive or collect.

In fact, many FIUs are so overwhelmed just doing the basics that they don’t have the time or resources to build a system like Neon to alleviate those issues.

‘Substantiation’ – as in filtering out large volumes of low-credibility defensive reports – is precisely the type of task that AI can perform at scale, thereby relieving the burden on analysts. And, as I type, the team at UNSW are working to further improve the ‘credibility assessment’ process in the Platform to achieve just that.

The ‘data investigation’ that you refer to – to ensure that the NRA does reflect and focus on real problems and the most significant predicate offences – is exactly the process that Neon is designed to address.

The ‘investigation’ process, built into Neon for that purpose, is designed to emulate the tasks that analysts perform during an initial investigation – such as cross-comparing SMRs received with other data (such as other SMRs; threshold transactions; police reports; companies databases; PEPs lists; open-source reporting and the like). But, rather than just conducting that ‘investigation’ on a few selected SMRs (as is currently the case in many FIUs), the Platform does it on all of them.

This ‘investigative’ process, and the significance/risk scoring that is applied, aims to ensure that the NRA that is produced, and the actions that follow, are based on the highest-quality credible, timely, probative data and information available.

John Chevis

Really interesting read! It’s amazing to see AI being used in such a practical way for AML risk assessments. Makes the whole process feel much more manageable.
